Holidays are a good time for cleaning up. To migrate an old on-premises file storage to the cloud, I used a few tools and invested some time. Here are my scenario and the tools I used.

The scenario

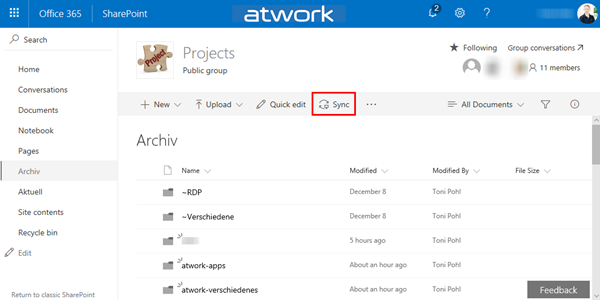

I had a virtual machine (which I reached via RDS from my holiday location) with iSCSI storage on a NAS system where old project files were stored. This storage had been in use for years. You can imagine ... the mess. To make it short: in that VM, the file storage was mapped as network drive P: (for Projects). With my work account, I have 1 TB of personal cloud storage. Since this should be an archive for my coworkers as well, I decided to use a SharePoint Online (SPO) site instead. I created an Office 365 group named "projects", which comes with an SPO site for storing the documents, and installed the OneDrive.exe client. Then I created a new document library and synchronized that (empty) library to the local computer.

The issue

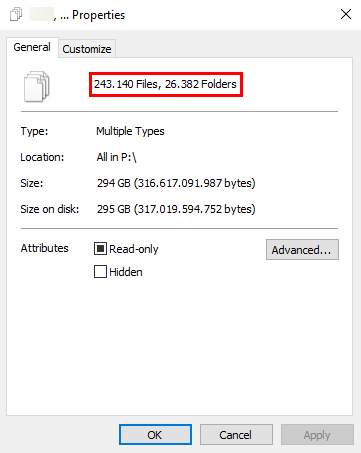

So far, so good. I could now copy all files from my P: drive to the OneDrive storage with Windows Explorer (or with robocopy). Theoretically. The issue was that the projects comprised almost 250,000 files in more than 25,000 folders, about 300 GB in total. By the way, the free tool GetFolderSize does a good job of showing an overview of files and folders as well.
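If you prefer to get such numbers without an extra tool, a few lines of PowerShell can produce a similar overview. The following is only a minimal sketch: the function name Get-StorageOverview is made up for this example, and it loads all items into memory, which may take a while on a storage of this size.

```powershell
# Hypothetical helper (not part of the original script):
# counts files and folders below a path and sums up the file sizes.
function Get-StorageOverview {
    param([Parameter(Mandatory)][string]$Path)

    # One recursive pass; -Force also includes hidden and system items
    $items   = @(Get-ChildItem -LiteralPath $Path -Recurse -Force -ErrorAction SilentlyContinue)
    $files   = @($items | Where-Object { -not $_.PSIsContainer })
    $folders = @($items | Where-Object { $_.PSIsContainer })

    # Sum is empty when there are no files, so fall back to 0
    $bytes = ($files | Measure-Object -Property Length -Sum).Sum
    if ($null -eq $bytes) { $bytes = 0 }

    [pscustomobject]@{
        Path    = $Path
        Files   = $files.Count
        Folders = $folders.Count
        SizeGB  = [math]::Round($bytes / 1GB, 2)
    }
}

# Example call for the network drive from the scenario:
# Get-StorageOverview -Path 'P:\'
```

The result is a single object, so it can be piped to Format-List or exported if you want to compare the numbers before and after the cleanup.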

Agreed, synchronizing that many files with an SPO library is not best practice...

Cleanup with tools

Although most of the files are presumably no longer needed, I wanted to archive them anyway. So I decided to check through the folders manually and compress them, so that each customer folder contains one ZIP archive per project. My compromise was to use 7-Zip as the packer and a small PowerShell script to save some manual work.

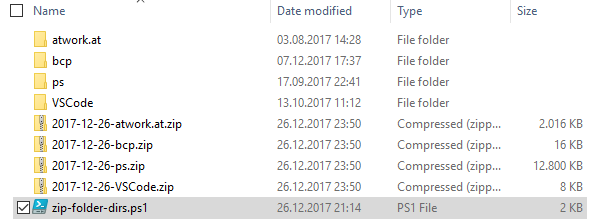

I created a zip-folder-dirs.ps1 file (see below) that packs every directory in the current path into its own ZIP file, with the current date as prefix. The following screenshot shows how it works: when started, the script packs each directory into a separate archive file. The directory "atwork.at" gets packed into "2017-12-26-atwork.at.zip", and so on.

Since I needed to look through the project files (and honestly deleted a lot...), I copied the small PowerShell script into each directory and executed it there. This semi-automated my work. Then I deleted the original folders ("atwork.at", etc.) and the .ps1 file, so that only the ZIP files were left in each customer folder.
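I did the deletion by hand, but this step could be scripted as well. The following sketch is an assumption of how that might look, not what I actually ran: the function name Remove-ZippedFolder is made up, and it only removes a folder if a non-empty ZIP file with the date-prefixed name from the script exists next to it. It supports -WhatIf, so you can preview what would be deleted first.

```powershell
# Hypothetical helper (not part of the original script): removes each
# subfolder that already has a matching "<yyyy-MM-dd>-<foldername>.zip".
function Remove-ZippedFolder {
    [CmdletBinding(SupportsShouldProcess)]
    param([Parameter(Mandatory)][string]$Path)

    foreach ($folder in Get-ChildItem -LiteralPath $Path -Directory) {
        # e.g. folder "atwork.at" matches "2017-12-26-atwork.at.zip"
        $pattern = '^\d{4}-\d{2}-\d{2}-' + [regex]::Escape($folder.Name) + '\.zip$'
        $zip = Get-ChildItem -LiteralPath $Path -File |
            Where-Object { $_.Name -match $pattern -and $_.Length -gt 0 } |
            Select-Object -First 1

        if ($zip) {
            # ShouldProcess returns $false when -WhatIf is used
            if ($PSCmdlet.ShouldProcess($folder.FullName, 'Remove folder')) {
                Remove-Item -LiteralPath $folder.FullName -Recurse -Force
            }
        } else {
            Write-Warning "No archive found for $($folder.FullName), folder kept."
        }
    }
}

# Preview only, nothing gets deleted:
# Remove-ZippedFolder -Path 'P:\Projects\CustomerA' -WhatIf
```

Note that the check only looks at the file name and size. It does not verify that the archive is complete, so a look into the ZIP file before deleting anything is still a good idea.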

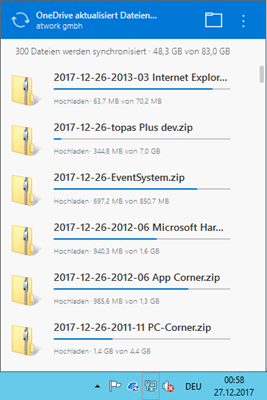

Let OneDrive.exe do the sync

Then I moved the (smaller) ZIP files into the OneDrive for Business folder. Remember, it's good to move or delete whatever is done, so that you don't get confused about which content is already in the cloud if you continue some days later. Since the synchronization takes hours to finish, doing this on a remote computer is perfect: I can go to bed now and check the results tomorrow.
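Moving the archives can be done in Windows Explorer, but a small loop works too. This is just a sketch under assumptions: the function name Move-ZipToSyncFolder and the paths in the example call are made up, and it expects the destination to be outside the source folder. It keeps the relative folder (for example the customer name) below the destination.

```powershell
# Hypothetical helper (not part of the original script): moves all ZIP files
# below $Source into $Destination and keeps the relative folder structure.
function Move-ZipToSyncFolder {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)][string]$Source,
        [Parameter(Mandatory)][string]$Destination
    )

    # Normalize the source root so relative paths can be cut off reliably
    $root = (Resolve-Path -LiteralPath $Source).ProviderPath.TrimEnd([char[]]'\/')

    foreach ($zip in Get-ChildItem -LiteralPath $root -Recurse -File -Filter '*.zip') {
        # Part of the path between the source root and the file, e.g. "CustomerA"
        $relativeDir = $zip.DirectoryName.Substring($root.Length).TrimStart([char[]]'\/')
        $targetDir = if ($relativeDir) { Join-Path $Destination $relativeDir } else { $Destination }

        if ($PSCmdlet.ShouldProcess($zip.FullName, "Move to $targetDir")) {
            New-Item -ItemType Directory -Path $targetDir -Force | Out-Null
            Move-Item -LiteralPath $zip.FullName -Destination $targetDir
        }
    }
}

# Example call; both paths are placeholders for your own environment:
# Move-ZipToSyncFolder -Source 'P:\Projects' -Destination 'C:\Sync\projects' -WhatIf
```

Because the files are moved and not copied, the source folders shrink as you go, which fits the "move what's done" approach described above.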

Hint: Check out "Restrictions and limitations when you sync files and folders": at the time of writing, there's a 15 GB file size limit. If a ZIP file is larger than that, you need to split it into smaller files (or, as I did, reorganize the content into more directories and pack those with the script). With the ZIP file method described here, you usually also stay within the maximum path length of 400 characters in OneDrive.
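To find archives that are too large before the sync client complains, a quick check like the following might help. It is a sketch only: the function name Find-OversizedFile is made up, and the default of 15 GB is simply the limit mentioned above, so please verify the value that currently applies to your tenant.

```powershell
# Hypothetical helper (not part of the original script):
# lists files below a path that are larger than a given limit.
function Find-OversizedFile {
    param(
        [Parameter(Mandatory)][string]$Path,
        [long]$MaxBytes = 15GB,       # limit quoted in this article, may differ today
        [string]$Filter = '*.zip'
    )

    Get-ChildItem -LiteralPath $Path -Recurse -File -Filter $Filter |
        Where-Object { $_.Length -gt $MaxBytes } |
        Select-Object FullName,
            @{ Name = 'SizeGB'; Expression = { [math]::Round($_.Length / 1GB, 2) } }
}

# Example call for the project drive from the scenario:
# Find-OversizedFile -Path 'P:\Projects'
```

Every file that shows up in the result is a candidate for splitting or for reorganizing its content into more directories before packing again.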

The benefit of using SharePoint Online

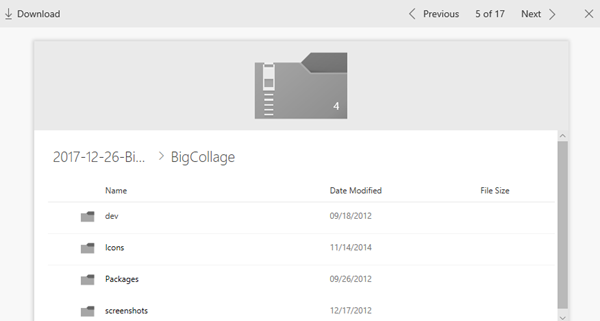

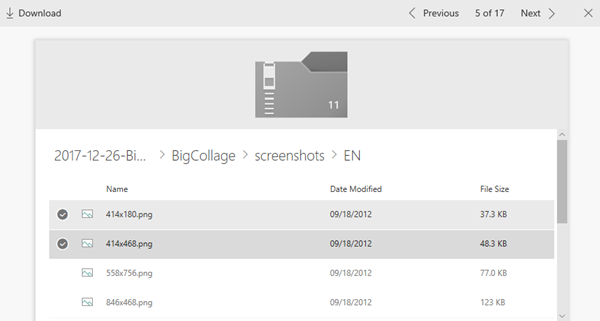

Since SPO can work with ZIP files and shows their content online, I think this is a fair compromise. This screenshot shows the content of an uploaded ZIP file.

When opening a ZIP file, you can navigate through the files, select multiple items and download them easily (or the whole ZIP file, of course).

Fair enough for a packed archive, isn't it?

The PowerShell script

Even if this cleanup might not be the most elegant way, it worked well for me. So, here's my quick zip-folder-dirs.ps1 for running through the hundreds of directories in my project storage. When executed, it runs through all directories in the current path and calls 7-Zip to pack each of them into its own archive file.

    # ZIP all folders in the following directory, atwork.at, Toni Pohl, 26.12.2017
    # $filePath = 'P:\Projects\CustomerA'
    # or use the current path:
    $filePath = (Get-Item -Path '.\').FullName

    # 7-Zip command line tool in its default installation folder
    $cmd = "$env:ProgramFiles\7-Zip\7z.exe"

    if (-not (Test-Path $cmd)) {
        Write-Warning "$cmd needed"
    } else {
        Write-Output "$cmd found."
        # date prefix for the archive names, e.g. 2017-12-26
        $timestamp = Get-Date -Format 'yyyy-MM-dd'
        # get the direct subdirectories only (no -Recurse)
        $folders = Get-ChildItem -Path $filePath -Directory
        $i = 0
        foreach ($subfolder in $folders) {
            $source = $subfolder.FullName
            $target = Join-Path $filePath "$timestamp-$($subfolder.Name).zip"
            # https://www.dotnetperls.com/7-zip-examples
            # -mx=0 "store" (no compression), -mx=1 "fastest", -mx=5 "normal", -mx=9 "ultra"
            & $cmd "a" "-tzip" "-mx=5" "$target" "$source"
            # 7-Zip returns 0 on success; report anything else
            if ($LASTEXITCODE -ne 0) { Write-Warning "7-Zip exit code $LASTEXITCODE for $source" }
            $i++
            Write-Output "$i. $target"
        }
        Write-Output "$i directories zipped in $filePath"
    }

Adapt it as needed. I hope this short article helps you clean up your own environment.